Why Major Disasters Are Almost Never Caused by One Big Mistake

Most people assume disasters happen because someone made a terrible decision. An engineer ignored a warning.

A manager cut a dangerous corner. Somewhere, someone failed badly, and other people paid for it.

The reality is more uncomfortable. The engineers who built the Space Shuttle Challenger were among the best in their field. The plant operators at Chernobyl were experienced and trained.

The crew of the Deepwater Horizon rig were professionals following procedures. None of them set out to cause a catastrophe. Most of them had no idea one was coming.

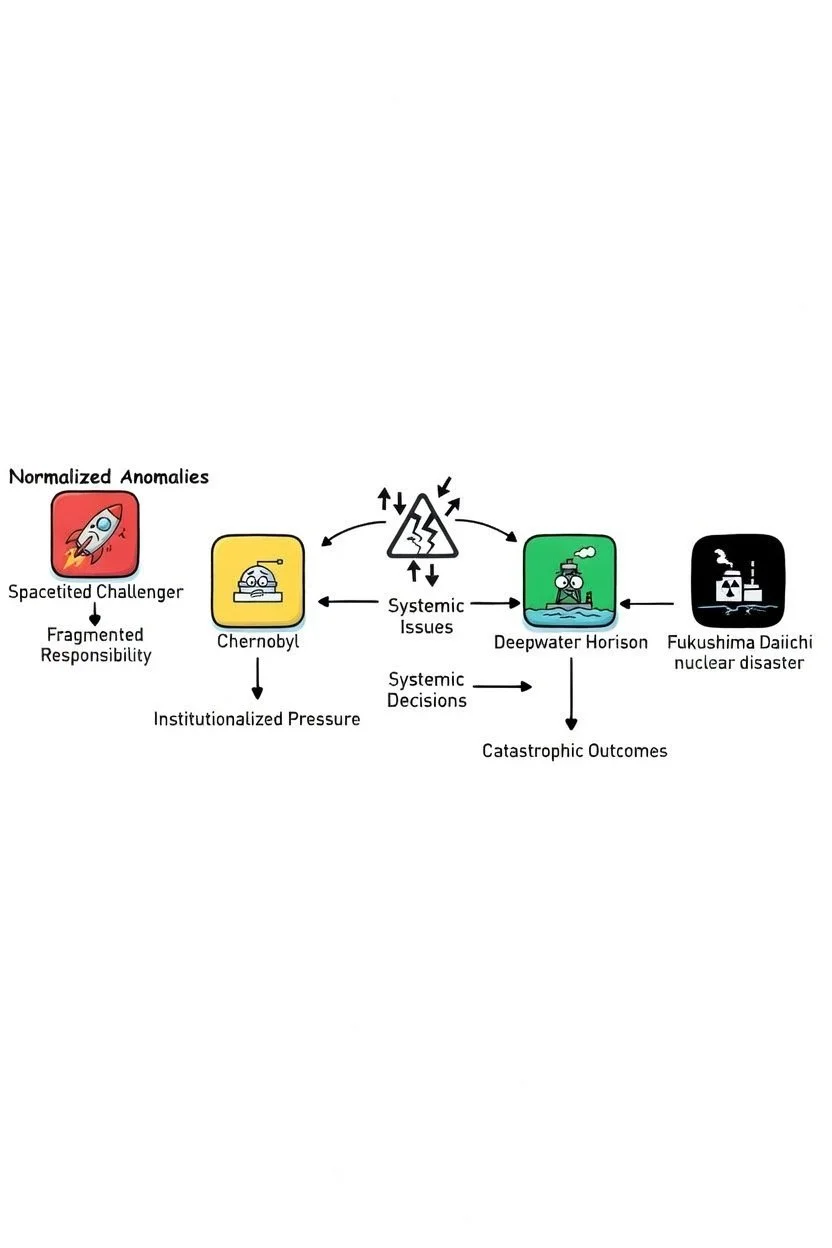

Modern disaster analysis has moved well beyond the search for a single bad actor. What researchers have found instead is a pattern that repeats across industries, decades, and continents.

Three mechanisms, working together, turn ordinary decisions into catastrophic outcomes.

Warnings become routine

In 1977, engineers at NASA identified problems with the O-ring seals on the Space Shuttle's solid rocket boosters. Over subsequent flights, they observed erosion and damage.

Each time, the shuttle returned safely. Each time, the anomaly was reviewed and reclassified as within acceptable parameters.

Sociologist Diane Vaughan studied NASA's internal records exhaustively after the Challenger disaster and named what she found the normalization of deviance.

When a warning sign repeatedly appears without leading to catastrophe, organizations stop perceiving it as a warning. It becomes the new baseline for what is considered safe.

By 1985, even the worst O-ring blow-by yet recorded on a shuttle mission was reviewed, assessed, and deemed an acceptable risk. The language in official documents had quietly shifted from alarm to routine without anyone ever deciding to change it.

The night before Challenger launched in January 1986, engineers argued against flying in the cold temperatures forecast for the next morning.

NASA managers challenged the logic, effectively reversing the burden of proof: instead of requiring evidence the shuttle was safe, they demanded engineers prove it was not.

Management overruled the engineers. An O-ring joint in the right solid rocket booster failed to seal at liftoff, and Challenger broke apart 73 seconds into flight.

NASA repeated this pattern 17 years later with Columbia, normalizing foam shedding from the external tank across multiple flights until a piece of foam struck and breached the orbiter's wing. Seven crew members died on reentry.

Responsibility gets divided until nobody owns the risk

The Deepwater Horizon blowout involved BP as the well operator, Transocean as the rig owner, and Halliburton as the cement contractor. Each party had partial responsibility for the well's safety.

None had full visibility into what the others were doing.

Halliburton's cement slurry design failed its own internal stability tests but was used anyway. BP did not verify the cementing job independently.

Transocean's blowout preventer had a dead battery and a misaligned pipe.

The U.S. regulatory body lacked the technical expertise to catch any of this.

When the rig crew observed anomalous pressure readings during a critical safety test, they needed the test to succeed in order to move to the next phase of operations.

They accepted an unfounded explanation for the pressure rather than investigating it. Forty minutes later, the well blew out.

When accountability is divided across multiple organizations, each party tends to assume others are performing the necessary safety checks.

The result is that nobody is. Researchers call this diffusion of accountability.

Combined with the production pressure to stay on schedule and on budget, it creates a system where the cost of raising a concern is immediate and personal while the cost of not raising it remains hypothetical.

Expertise creates blind spots

Professional experience is typically treated as a safeguard. In complex systems under pressure, it can also be a liability.

Experienced professionals process information quickly, using pattern recognition built from years of repeated situations. This is efficient in routine conditions and dangerous in novel ones.

Confirmation bias, the tendency to interpret new information in ways that support existing beliefs, can become stronger with expertise rather than weaker.

At Deepwater Horizon, the rig crew had seen anomalous pressure readings before and had explanations for them.

When the critical pressure test showed signs the well was leaking, they applied an existing framework to explain it away rather than treating it as something new.

They were not careless. Their experience gave them a confident, plausible, and wrong answer.

At Chernobyl, Deputy Chief Engineer Anatoly Dyatlov's domineering confidence in his ability to manage the RBMK reactor led him to push operators past multiple warning signs during the test.

When the reactor power dropped unexpectedly, he ordered it raised back up even though the reactor was in a critically unstable configuration. He was certain he could control the situation. The reactor's physics did not allow it.

At Fukushima, TEPCO and Japanese regulators had operated the plant safely for decades and concluded from this that their emergency preparations were adequate.

They had not prepared for a total station blackout because their experience told them it was not a realistic scenario. The 2011 earthquake and tsunami made it one.

What these patterns have in common

None of the three mechanisms above require bad intentions, incompetent actors, or even a single large mistake.

They require only that an organization operates under pressure for long enough that small anomalies get explained away, safety responsibility gets distributed across enough layers that nobody sees the full picture, and experienced professionals trust their pattern recognition in conditions that have quietly exceeded what their experience actually covers.

The chain of events at Chernobyl began the day before the explosion, with a test delay that left the reactor in an unstable state, a decision to pull out almost all control rods to compensate, and a choice to run the test at a power level far below specifications.

Each individual step was either standard practice or a reasonable response to an unexpected condition. Their interaction produced a runaway power surge and the explosions that destroyed the reactor.

Understanding these mechanisms matters because they are not unique to oil rigs, nuclear plants, or space programs.

They are present in any organization where production pressure accumulates over time, where responsibility for complex systems is divided, and where the people closest to the danger have the least power to stop it.

The question is not whether this pattern will produce the next disaster.

It is whether the organizations involved will recognize the pattern before it does.
