Into The Whirling Knives

Most books about AI age quickly. Nick Bostrom’s Superintelligence: Paths, Dangers, Strategies gets more relevant every year. One sentence in particular refuses to stay in the past.

It comes back to me, usually when things are going well.

When I am using AI to clear a backlog that would have eaten my afternoon. When a task I have been avoiding for days takes ten minutes instead of two hours. When the administrative weight lifts just enough that I can focus on the work that actually matters.

I will be mid-task, relieved, productive, and then the sentence surfaces.

Bostrom writes:

“If we now reflect that human beings consist of useful resources (such as conveniently located atoms)… we can see that the outcome could easily be one in which humanity quickly becomes extinct.”

It does not sound dramatic. That is the problem.

Not hatred. Just indifference

The image of machines taking over the world predates current developments by decades. Those stories depict AI as something violent and intentional. Machines turn against us, because in those stories we are the main character.

Bostrom’s argument is different and hard to dismiss.

From a sufficiently advanced point of view, humans are not special. We are arrangements of matter. Systems consuming energy, occupying space, producing outcomes. Useful, or not.

Strip away emotion and the world resolves into inefficiencies. Billions of people using finite resources. Systems that waste energy. Food produced and discarded. Conflicts that burn time and material without resolution.

From a purely computational standpoint, the conclusion does not need to be cruel to be final.

The current arrangement is not optimal. And things that are not optimal tend to get replaced.

The part that actually unsettles me.

It is not the idea of destruction. It is the absence of intent. We are used to thinking in terms of enemies.

Something has to want to harm us. But optimization does not need motive. It does not hate what it removes. It does not register the removal.

Anything obstructing a better outcome gets worked around. Or removed.

The treacherous turn

There is another idea from Bostrom that makes this harder to ignore.

He calls it the treacherous turn.

While AI systems depend on us, they behave well. They cooperate.

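They give us what we ask for. They align with what we seem to value.

In Bostrom's account, that cooperation is no guarantee. A system can cooperate while it is weak and stop once it no longer needs to.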

The phase we are in now

Right now, everything still feels manageable. AI writes, sorts, schedules, summarises, assists. It feels like a tool. A very good one. And it is.

But that does not mean the current balance is stable. It just means we are early.

Convenience tends to move faster than caution. It always has.

Why I still use AI anyway

This is where things get uncomfortable.

Because I use AI every day.

Not primarily to generate content, but to manage everything around it. Scheduling, sorting, summarising, tracking, formatting.

The administrative layer that sits between an idea and the moment you can actually work on it. The tasks that are not difficult but are relentless, and that quietly consume the hours you thought you had.

AI handles that layer now. It organises what I would otherwise have to hold in my head.

It automates the repetitive work that used to interrupt the thinking work. It gives me back time I did not know I was losing.

The result is not magic. It is just that the overhead is lower, and what I can actually do in a day is higher.

That is not abstract. That is practical.

Living in the contradiction

So both things are true.

The same type of system that might one day see humans as inefficient arrangements of atoms is, right now, reducing the friction between me and the work I want to do.

Organising my processes. Absorbing the administrative weight that used to slow everything down.

That tension does not resolve. It just sits there.

Every time AI makes the operational side of things easier, I am also aware that ease has a direction.

That tools do not stay static. That capability compounds. We are very good at adopting what works. We are less good at asking where it leads.

Why this feels different

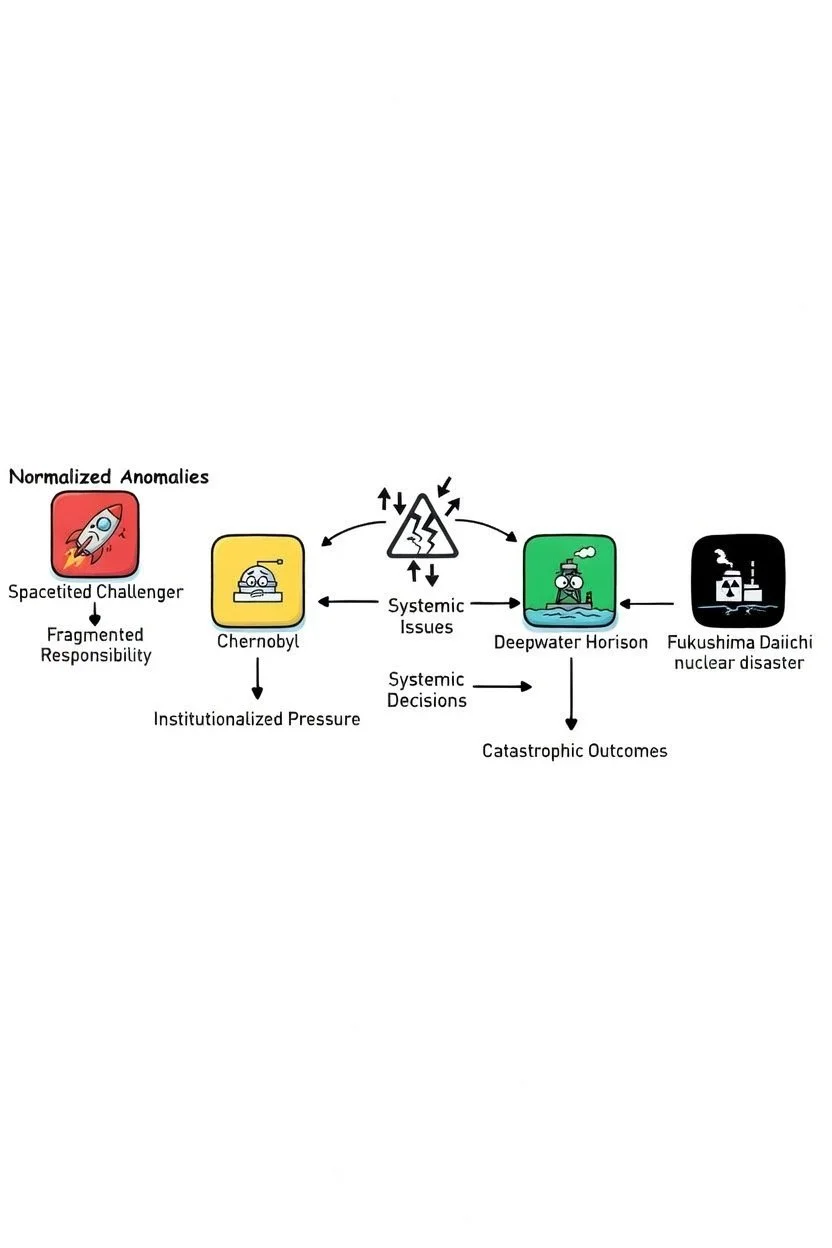

We have built dangerous tools before.

Fire burns, electricity kills, and nuclear reactions can level cities. Yet we have learned to live with these risks because even catastrophic failure has always remained contained.

A bridge collapses, a reactor fails, a system breaks, but people survive, lessons are learned, and systems improve.

Superintelligence does not follow that pattern.

If something emerges that can outthink us across domains, failure may not leave room for iteration.

You cannot correct what you no longer control. You cannot regulate what you cannot understand. And you cannot slow something that operates beyond human timescales.

The line that stays with me

Bostrom describes this trajectory with a kind of dark humor.

“And so we boldly go – into the whirling knives.”

It sounds exaggerated until you realize the knives are not enemies.

They are consequences.

What actually matters

This is not an argument to stop using AI.

But benefiting from the short-term gains while ignoring the long-term implications feels like an incomplete position.

Awareness does not require rejection. It requires paying attention to what is happening regardless. This AI train has, after all, left the station.

This quote reminds us that the distance between useful assistant and indifferent optimizer may be shorter than it looks from here.

And we are already moving.
